False information travels quickly across social media platforms. From election rumours to public health myths, misinformation can spread through online networks faster than factual corrections. Technology companies have experimented with several solutions, including removing posts, reducing algorithmic amplification, and attaching warning labels. Yet a fundamental question remains unresolved: can simple transparency mechanisms discourage the creation of misinformation before it spreads?

A recent study led by Prof. Tao Li from City University of Hong Kong offers a new perspective on this problem. Published in IEEE Transactions on Network Science and Engineering, the paper, titled "Transparent Tagging for Strategic Social Nudges on User Generated Misinformation", investigates how social media platforms can design tagging policies that influence both audiences and content creators. The study develops a mathematical framework to analyse how warning labels affect online discussions and how those discussions shape the behaviour of content producers.

The research suggests that a simple strategy may be more powerful than previously assumed. When platforms openly disclose the outcome of their misinformation detection systems, even when those systems are imperfect, the resulting social responses can encourage creators to produce more authentic content.

Why misinformation remains difficult to control

Social media platforms depend heavily on user-generated content. Unlike traditional news organisations, these platforms do not employ most of the individuals who create posts, videos, or comments. This means the companies hosting the platforms have limited direct control over the accuracy of what is published.

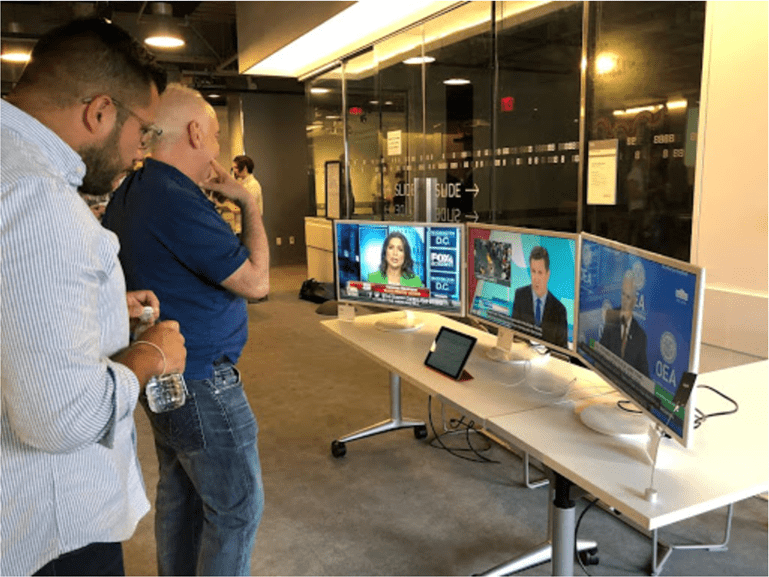

The consequences of this model have become increasingly visible. During the 2016 United States presidential election, misleading stories about political candidates circulated widely online. Similarly, during the COVID-19 pandemic, social media platforms hosted a wave of misinformation related to vaccines, mask policies, and virus transmission. Such misinformation can have significant social and political consequences, especially when it spreads through large online networks.

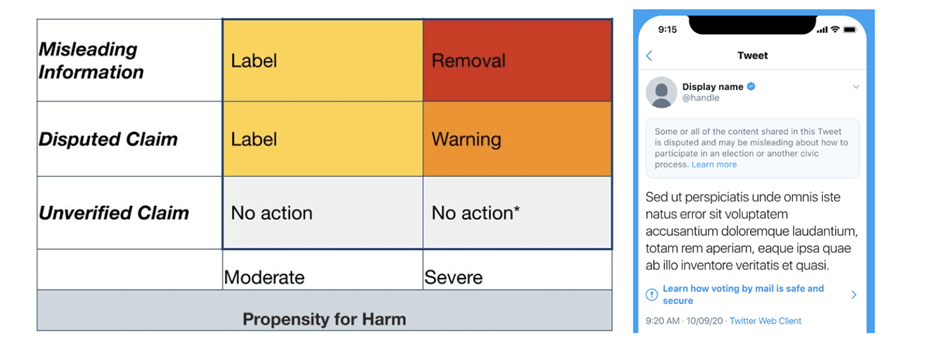

Platforms have attempted to address this issue through several forms of moderation. These measures include removing misleading posts, suspending accounts that repeatedly share false information, and reducing the visibility of problematic content within recommendation algorithms. Another widely adopted approach involves attaching labels or warnings that alert users to the possibility that a piece of content may be misleading.

Modelling the social media ecosystem

To examine how warning labels influence behaviour, the researchers developed a theoretical model that captures the strategic interactions among three key actors in the social media environment. These actors are the platform itself, the content provider who creates the post, and the users who consume and react to that content.

In the model, the social network platform acts as a sender of information signals. It observes a post through an automated detection system and adds a tag indicating whether the content appears authentic or misleading. However, the detection process is not perfect. Artificial intelligence models and human fact-checking both introduce the possibility of classification errors.
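To make the effect of an imperfect detector concrete, the following minimal Python sketch applies Bayes' rule to a single tag. It is an illustration only, not the paper's formulation: the function name and the parameters `prior`, `true_positive_rate`, and `false_positive_rate` are assumptions introduced here.

```python
def posterior_misleading(prior, true_positive_rate, false_positive_rate,
                         tagged_misleading):
    """Probability that a post is misleading after a user sees its tag.

    prior                -- assumed share of misleading posts before any tag
    true_positive_rate   -- P(tagged misleading | post is misleading)
    false_positive_rate  -- P(tagged misleading | post is authentic)
    tagged_misleading    -- True if the platform attached a "misleading" tag
    """
    if tagged_misleading:
        like_misleading = true_positive_rate
        like_authentic = false_positive_rate
    else:
        like_misleading = 1 - true_positive_rate
        like_authentic = 1 - false_positive_rate

    # Total probability of observing this tag (the evidence term in Bayes' rule).
    evidence = prior * like_misleading + (1 - prior) * like_authentic
    if evidence == 0:
        raise ValueError("this tag cannot occur under the given rates")
    return prior * like_misleading / evidence


if __name__ == "__main__":
    # Hypothetical numbers chosen purely for illustration.
    print(posterior_misleading(0.3, 0.8, 0.1, tagged_misleading=True))
    print(posterior_misleading(0.3, 0.8, 0.1, tagged_misleading=False))
```

With these made-up numbers, a "misleading" tag raises a user's belief from 0.30 to roughly 0.77, while an "authentic" tag lowers it to roughly 0.09. The tag is informative even though the detector errs in both directions.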

The content provider represents the individual who creates the original post. According to the model, this individual chooses how much effort to invest in ensuring the accuracy of the information they share. Producing reliable content requires effort, while spreading misleading claims may require little verification. The provider must therefore balance the cost of effort against potential reputational consequences.
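The trade-off facing the provider can be sketched with a toy calculation. The functional forms below are assumptions made for illustration and are not taken from the paper: effort `e` between 0 and 1 is treated as the probability that the post is authentic, effort costs `cost * e**2`, and reputational loss is taken to be proportional to the chance of receiving a "misleading" tag.

```python
def best_effort(cost, penalty, true_positive_rate, false_positive_rate,
                steps=1000):
    """Grid-search the effort level that maximises a toy provider utility.

    Assumed utility: -cost * e**2 - penalty * P(post is tagged misleading),
    where P(tagged misleading) = (1 - e) * TPR + e * FPR.
    """
    best_e, best_u = 0.0, float("-inf")
    for i in range(steps + 1):
        e = i / steps
        # Chance the detector flags the post, given the provider's effort.
        p_flag = (1 - e) * true_positive_rate + e * false_positive_rate
        utility = -cost * e ** 2 - penalty * p_flag
        if utility > best_u:
            best_e, best_u = e, utility
    return best_e


if __name__ == "__main__":
    # Hypothetical parameters: an informative detector versus a coin flip.
    print(best_effort(1.0, 1.0, 0.8, 0.1))
    print(best_effort(1.0, 1.0, 0.5, 0.5))
```

Under these assumptions the provider invests effort only when the tag carries information: the chosen effort grows with the gap between the true and false positive rates and falls to zero when the detector is no better than a coin flip.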

The third actor is the audience of social media users. After seeing the tag attached to a post, users decide whether to respond positively or negatively. Their comments and reactions then influence how widely the post spreads and how it is perceived within the network.

Understanding the role of social nudges

A central concept within the study is the idea of a social nudge. On social media platforms, individual reactions to posts accumulate into collective trends. When a post receives many negative comments or critical responses, it can damage the reputation of the person who shared it. Conversely, positive responses can enhance credibility and influence.

The researchers modelled this dynamic using a stochastic model known as a branching process, which is commonly used in fields such as epidemiology and network science. In this context, each user who shares a post may influence several others, creating a cascade of interactions across the network.

Within the model, users can leave either positive or negative comments. The proportion of negative responses represents the collective sentiment toward a particular post. As more people react, this proportion stabilises and reflects the content’s overall reputation.
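The stabilising proportion can be seen in a small simulation. The sketch below is a simplified stand-in for the paper's branching process, under assumptions introduced here: every user reacts negatively with a fixed probability `p_negative`, and each user exposes between zero and three further users. All names and default values are hypothetical.

```python
import random


def simulate_cascade(p_negative, mean_offspring=1.5, initial_users=20,
                     max_users=5000, seed=0):
    """Simulate a comment cascade and track the share of negative reactions.

    Returns a list with the running proportion of negative comments after
    each generation of the cascade.
    """
    rng = random.Random(seed)
    spread_prob = mean_offspring / 3  # each of 3 contacts is reached with this probability
    active = initial_users
    negative = total = 0
    history = []
    while active > 0 and total < max_users:
        next_active = 0
        for _ in range(active):
            if total >= max_users:
                break
            total += 1
            # The user leaves a negative comment with probability p_negative.
            if rng.random() < p_negative:
                negative += 1
            # The user exposes 0 to 3 new users (binomial offspring count).
            next_active += sum(rng.random() < spread_prob for _ in range(3))
        history.append(negative / total)
        active = next_active
    return history


if __name__ == "__main__":
    shares = simulate_cascade(p_negative=0.3)
    print(shares[0], shares[-1])
```

Early generations give a noisy estimate because few users have reacted. As the cascade grows, the running share settles close to the underlying probability, which is the sense in which the proportion comes to reflect a post's overall reputation.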

When detection systems make mistakes

An important aspect of the study is the recognition that misinformation detection systems are imperfect. Artificial intelligence models can misclassify posts, and even expert fact checkers may disagree about the accuracy of complex claims. These errors introduce uncertainty into any tagging system.

Using equilibrium analysis within the Bayesian persuasion framework, the researchers derived the optimal platform strategy. The analysis examined how different tagging policies influence the behaviour of both users and content creators, accounting for the cost of producing accurate content and the reputational impact of negative feedback.

The results produced a striking conclusion. Even when detection systems are imperfect, the optimal policy is transparent tagging. In other words, platforms should openly display the detection outcome rather than concealing uncertainty.

Combating misinformation isn’t just a battle for platforms, governments, or law enforcement. The true guardians of information integrity should be the people. When platforms provide transparent and honest tagging, it triggers a collective response from the mass acting as a social nudge that forces content creators to prioritize authenticity and makes the production of misinformation fundamentally unprofitable.

—Tao Li

Implications for platform governance

The findings carry important implications for the governance of digital platforms. Debates about misinformation often focus on improving detection technologies, particularly through advances in artificial intelligence and machine learning. While such improvements remain important, the study indicates that transparency can be a powerful policy tool in its own right.

By revealing how their detection systems classify posts, platforms enable users to participate more actively in evaluating information. The resulting discussions create a form of distributed oversight that shapes content providers’ reputations.

The research also highlights the importance of considering behavioural incentives when designing moderation policies. Content creators respond not only to platform rules but also to their audiences’ social reactions. Policies that influence those reactions can therefore alter the incentives that drive the production of information.

Reference

Yang, Y-T., Li, T., & Zhu, Q. (2026). Transparent tagging for strategic social nudges on user generated misinformation. IEEE Transactions on Network Science and Engineering. https://doi.org/10.1109/TNSE.2025.3591983