In a world where artificial intelligence is beginning to write code, compose music, and even diagnose diseases, a new question is quietly emerging inside hospitals and universities. Can machines also teach surgeons how to operate? And more importantly, can they do it safely, effectively, and at scale?

A recent study led by Yves Shabo Nkulu-Ily at the University of Kentucky, published in Interactive Learning Environments, explores exactly this possibility. The research introduces a new framework that combines extended reality and artificial intelligence to simulate surgical decision-making in immersive environments. It is not just about visual simulation. It is about replicating how surgeons think, adapt, and refine their skills over time.

The hidden crisis in surgical training

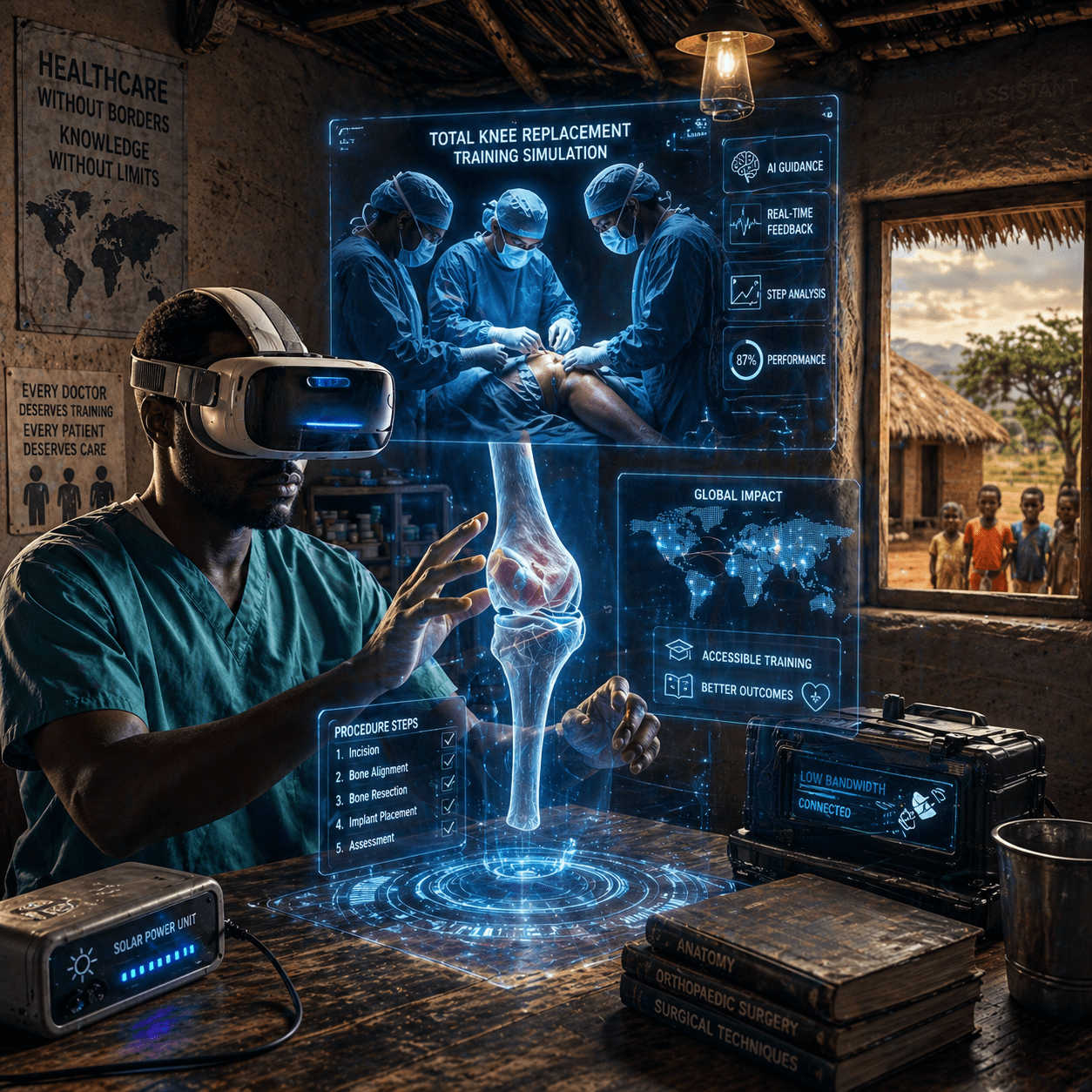

Surgical training is expensive, time-intensive, and deeply dependent on access to mentors and infrastructure. In many parts of the world, trainees simply do not have the opportunity to practice complex procedures under expert supervision.

Traditional simulation tools have attempted to address this gap, but they often fall short. Many systems focus on mechanical repetition rather than cognitive decision-making. Others require high-end infrastructure, making them inaccessible in low-resource settings.

The study recognizes this gap and positions itself within a broader global problem. If access to high-quality training remains uneven, so too will the quality of healthcare outcomes. The stakes are not academic. They are directly tied to patient safety and surgical success.

Inside the virtual operating room

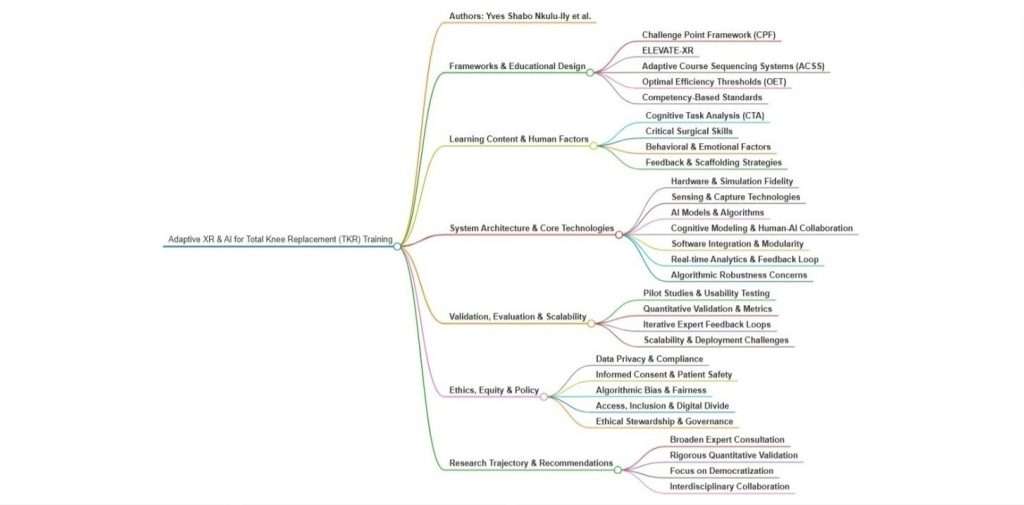

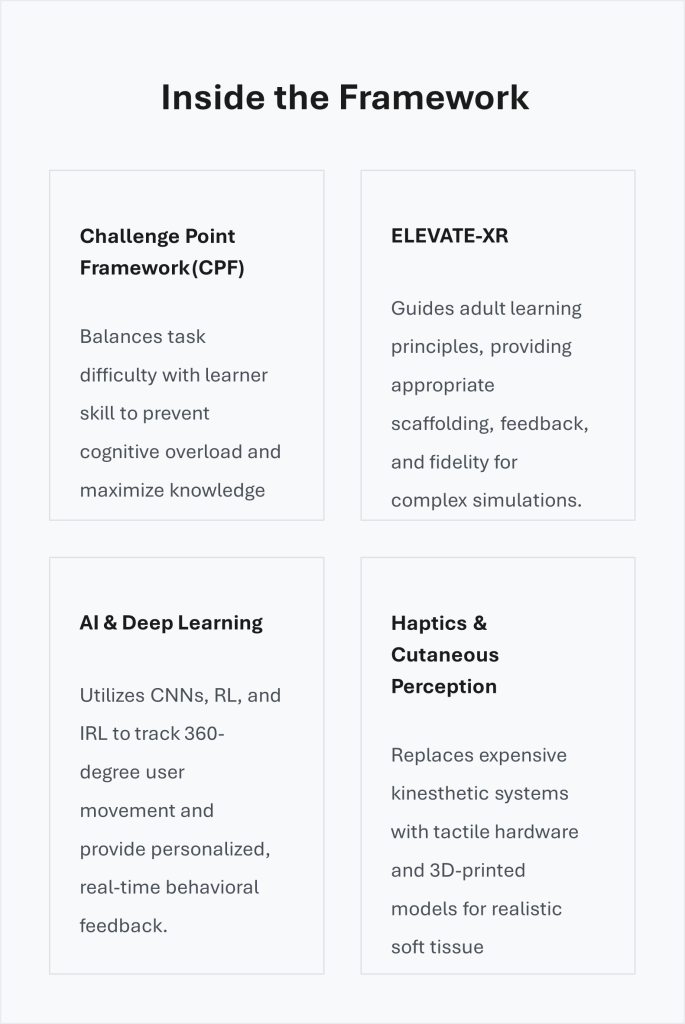

The mind map in Figure 1 shows how XR and AI can be combined into a pedagogical approach for delivering meaningful feedback in surgical distance education, especially in resource-limited areas with unreliable internet. It highlights how to capture the essential characteristics of tasks and environments so that learning experiences stay realistic and engaging.

Why does this matter?

Geography, institutional budgets, and internet infrastructure severely limit global access to specialized surgical training. By designing an XR/AI system that accommodates low-bandwidth environments and leverages cost-effective 3D-printed physical models, this research provides a scalable blueprint for training medical professionals in underserved regions without sacrificing the tactile realism required for patient safety.

Orthopedic surgery, particularly intricate procedures like Total Knee Replacement (TKR), requires a masterful touch, split-second decision-making, and years of hands-on practice. But how do you teach that mastery when the student and the operating table are miles apart?

The historical challenges of distance education in clinical settings are profound. Trainees often lack immediate, actionable feedback, receive inadequate behavioral training, and lack the realistic tactile sensations essential for soft-tissue management. The integration of extended reality (XR) and artificial intelligence (AI) proposed in the study aims to bridge that gap, offering a pedagogical approach that is especially relevant in resource-limited areas with unreliable internet access.

The challenge point framework & adaptive learning

At the heart of this research is an adaptive XR training system that dynamically adjusts functional task difficulty using the Challenge Point Framework (CPF). The CPF is a pedagogical model that balances task difficulty against the learner's current skill level. In doing so, it aims to optimize learning outcomes, prevent cognitive overload, and sustain engagement through appropriately challenging tasks.

To support this, the ELEVATE-XR framework guides the overarching design of these learning experiences. This framework complements the CPF by providing detailed guidance on XR forms, learning activities, and scenario elements, including complexity, feedback, and fidelity. Focusing heavily on adult learning principles, it provides detailed scaffolding appropriate to varying proficiency levels. When combined, these frameworks enable the creation of adaptive course sequencing systems (ACSS) that use AI to monitor performance and alter training scenarios in real time.
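To make the idea of adaptive sequencing concrete, here is a minimal Python sketch of a difficulty controller in the spirit of the CPF. It is an illustration under stated assumptions, not the study's implementation: the class name `AdaptiveDifficulty`, the five difficulty levels, the five-attempt window, and the 0.6 and 0.85 success-rate thresholds are all hypothetical values chosen for the example.

```python
from collections import deque


class AdaptiveDifficulty:
    """Hypothetical controller that keeps a learner's recent success
    rate inside a target band by raising or lowering task difficulty."""

    def __init__(self, levels=5, window=5, low=0.6, high=0.85):
        self.levels = levels              # number of difficulty levels (assumed)
        self.level = 1                    # start at the easiest level
        self.low = low                    # below this rate the task is too hard
        self.high = high                  # above this rate the task is too easy
        self.recent = deque(maxlen=window)

    def record(self, success: bool) -> int:
        """Record one attempt and return the level for the next attempt."""
        self.recent.append(1 if success else 0)
        # Only adjust once a full window of attempts has been observed.
        if len(self.recent) == self.recent.maxlen:
            rate = sum(self.recent) / len(self.recent)
            if rate > self.high and self.level < self.levels:
                self.level += 1
                self.recent.clear()       # restart the window at the new level
            elif rate < self.low and self.level > 1:
                self.level -= 1
                self.recent.clear()
        return self.level


controller = AdaptiveDifficulty()
for outcome in [True, True, True, True, True]:
    next_level = controller.record(outcome)
print(next_level)
```

In this sketch, a run of successes pushes the learner to a harder scenario, while a run of failures steps the difficulty back down. A real system would likely replace the simple pass/fail signal with richer performance measures.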

The power of haptics and 3D reality

Through rigorous cognitive task analysis, researchers identified the critical procedural steps essential for a successful TKR, emphasizing that visual simulation alone is insufficient. The research highlights the absolute necessity for realistic environments that replicate the precise tactile experience of surgery.

To achieve this, the system proposes a multi-sensory combination: Virtual Reality paired with physical 3D-printed models and haptic feedback devices. This innovative approach offers an exciting, flexible alternative to traditional, highly expensive kinesthetic force-feedback systems. By focusing on cutaneous perception, often underestimated in standard models, the system activates different body areas to deliver a rich, realistic variety of tactile feedback.

AI-driven analytics and human-AI alignment

At the technical core of this innovation are sophisticated AI algorithms incorporating both reinforcement learning (RL) and inverse reinforcement learning (IRL). These algorithms analyze learner behavior and motivation, allowing the system to provide dynamic, individualized feedback that fosters effective human-AI collaboration. Deep learning models, including convolutional neural networks (CNNs), work in tandem with high-speed videography to capture and analyze delicate surgical movements in real time.
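As a rough illustration of how a learning algorithm could individualize feedback, the sketch below uses an epsilon-greedy multi-armed bandit, one of the simplest reinforcement learning settings. This is a stand-in chosen for brevity, not the RL or IRL method used in the study. The class name `FeedbackBandit`, the feedback styles, and the reward signal (imagined here as a score for how much the learner improved after the feedback) are all assumptions made for the example.

```python
import random


class FeedbackBandit:
    """Epsilon-greedy bandit over hypothetical feedback styles."""

    def __init__(self, arms, epsilon=0.1, seed=0):
        self.arms = list(arms)
        self.epsilon = epsilon                      # exploration probability
        self.rng = random.Random(seed)              # seeded for reproducibility
        self.counts = {a: 0 for a in self.arms}     # times each style was used
        self.values = {a: 0.0 for a in self.arms}   # mean reward per style

    def choose(self):
        """Pick a feedback style: explore at random or exploit the best estimate."""
        if self.rng.random() < self.epsilon:
            return self.rng.choice(self.arms)
        return max(self.arms, key=lambda a: self.values[a])

    def update(self, arm, reward):
        """Fold one observed reward into the running mean for that style."""
        self.counts[arm] += 1
        self.values[arm] += (reward - self.values[arm]) / self.counts[arm]


bandit = FeedbackBandit(["visual", "haptic", "verbal"], epsilon=0.0)
bandit.update("haptic", 1.0)   # assumed: learner improved after haptic feedback
bandit.update("visual", 0.2)
print(bandit.choose())
```

With exploration switched off (`epsilon=0.0`), the bandit always selects the style with the highest estimated reward. A nonzero `epsilon` lets it occasionally try other styles so the estimates keep improving.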

Going deeper, the research also explores the integration of cognitive architectures, such as ACT-R, to simulate human cognition, decision-making, and skill acquisition. However, recognizing the limitations of generalizing ACT-R findings, researchers advocate for models that also factor in emotional variables such as learner motivation and fatigue.

By optimizing the system for low-bandwidth environments, the framework aims to improve accessibility in underserved regions, ultimately democratizing access to high-quality surgical education.

— Yves Shabo NKULU-ILY

Ethics, scalability, and democratization

Deploying advanced AI in medical education requires rigorous ethical stewardship. The study thoroughly addresses the need to protect personal health information under regulations like HIPAA and GDPR. Furthermore, it stresses the importance of securing informed consent and actively mitigating biases in AI algorithms to prevent systemic disparities in training.

Ultimately, the research tackles the digital divide head-on. By merging CPF with the Optimal Efficiency Thresholds (OET) framework, the combined model is designed to optimize resource allocation and keep the system efficient even in low-bandwidth environments. The overarching goal is clear: democratize access to world-class surgical education, improve learner engagement, and enhance future patient outcomes.

Current work and future directions

- Advances in robotics and haptic technology are changing how surgeons learn soft tissue release remotely. Researchers are combining algorithms with high-speed video and the CPF to recreate delicate sensations safely. One recent outcome of this initiative is a new competency design framework that helps non-experts develop cost-effective system architectures. Available at SSRN: https://ssrn.com/abstract=6568980 or http://dx.doi.org/10.2139/ssrn.6568980

- Building on these foundational studies, the latest efforts tackle the technical and sociological factors influencing personalized feedback in distance education. The focus remains steadfast on enhancing long-term memory retention, ensuring learner safety, and establishing a competency design framework that empowers non-experts to develop cost-effective, scalable system architectures. (study ref: [IRB # 111588 Exempt])

Reference

Nkulu-Ily, Y. S., Gantwerker, E. A., Vaughan, N., Cheng, Q. (Shawn), & Dhanda, J. (2025). Simulating realistic surgeon decisions and actions in immersive medical distance education with XR and AI. Interactive Learning Environments, 1–46. https://doi.org/10.1080/10494820.2025.2542893