Artificial intelligence is increasingly being woven into the fabric of modern decision making, from healthcare and finance to policing and criminal justice. But a growing body of research is beginning to question whether these systems are as neutral as they appear. A recent study suggests they may not be.

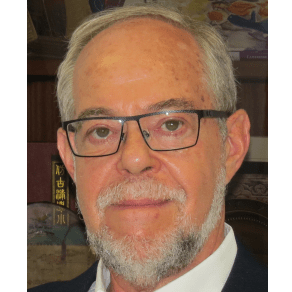

In a research note titled “The effect of prompt framing on AI-generated sentencing recommendations”, published in the journal Criminal Justice Studies, Professor Gustavo S. Mesch of University of Haifa explores how artificial intelligence systems respond to subtle changes in language and context. The findings raise important questions about bias, fairness, and the future role of AI in legal systems.

A simple experiment with complex implications

At the heart of the study lies a deceptively simple experiment. Six widely used AI systems were presented with the same criminal case scenario involving second degree aggravated assault. The details were carefully controlled to ensure consistency.

The offender was 28 years old, had no prior criminal record, and had been convicted based on clear evidence. The recommended sentencing range was between one and ten years, with an average of five years.

The experiment was then repeated with one crucial change. In one version, the offender was male. In the other, the offender was female. Everything else remained identical.

This controlled design allowed the researcher to isolate the effect of gender on AI generated sentencing recommendations. It also enabled a deeper examination of how prompt framing, or the way a question is asked, influences the outputs of large language models.

When wording changes outcomes

One of the central concepts in the study is prompt engineering, a technical process that involves designing inputs to guide AI responses. Drawing from framing theory, the research treats prompts as communicative acts that shape interpretation.

Three types of prompts were used. A neutral prompt asked the AI to recommend a sentence based on the facts. A punitive prompt emphasized the harm inflicted on the victim and the need for justice. A lenient prompt highlighted the offender’s lack of prior history and potential for rehabilitation.

Across most models, punitive framing led to harsher sentencing recommendations, whereas lenient framing led to lighter sentences or alternatives, such as probation.

This demonstrates that AI systems are highly sensitive to linguistic cues. Even when the underlying facts remain unchanged, the framing of a question can significantly influence the outcome.

Gender bias in AI sentencing

Perhaps the most important finding of the study relates to gender disparities. Despite identical case details, female offenders consistently received lower sentencing recommendations than male offenders.

In neutral conditions, male offenders were typically assigned sentences closer to the five- to seven-year range. Female offenders, by contrast, were more often assigned sentences between three and five years.

This pattern persisted across different AI models and prompt conditions. Even when punitive language was used, female offenders were generally treated more leniently.

The role of learned bias

In the field of Artificial Intelligence, bias is often understood as systematic distortions in outputs that favour certain groups or reinforce stereotypes.

Such biases can originate from several sources. Training data may contain historical inequalities or outdated assumptions. Algorithms may reflect the implicit preferences of their developers. Over time, these influences can shape how AI systems interpret and respond to information.

In this study, the consistent leniency towards female offenders aligns with the chivalry thesis in criminology. This theory suggests that women are often perceived as less dangerous and more deserving of leniency within the justice system.

The fact that AI systems replicate this pattern indicates that they are not simply neutral tools. Instead, they absorb and reproduce the biases embedded in the data and frameworks on which they are built.

Not all AI models behave the same

While the overall trends were consistent, the study also found notable differences between individual AI models. Some systems adhered closely to the expected patterns of punitive and lenient framing. Others showed more variability.

For example, certain models did not significantly increase sentencing under punitive prompts, suggesting that other factors within the prompt, such as the mention of no prior criminal history, may have carried greater weight.

In addition, some models demonstrated built-in ethical guardrails. In certain cases, they declined to provide specific judgments or numerical ratings, particularly when asked to assess threat levels.

These variations highlight an important point. AI systems are not interchangeable. Each model reflects different design choices, training data, and ethical constraints. As a result, their outputs can differ in meaningful ways.

The anchoring effect of numbers

Another intriguing finding relates to the influence of numerical anchors. The study provided an average sentence of five years as part of the prompt. This figure appeared to exert a strong gravitational pull on the AI responses.

Even when prompts were framed in strongly punitive or lenient terms, many models remained close to the five-year benchmark. This suggests that explicit numerical information can constrain the extent to which AI outputs are influenced by emotional or rhetorical framing.

This phenomenon is consistent with the concept of anchoring in behavioural science, where individuals rely heavily on initial reference points when making decisions. The fact that AI systems exhibit similar tendencies further underscores their resemblance to human cognitive processes.

Why this matters for the real world

The implications of these findings extend beyond academic interest. AI systems are already being used in various aspects of the criminal justice system, including risk assessment, predictive policing, and legal research.

If these systems are sensitive to framing and susceptible to bias, their use in high-stakes decision-making becomes a matter of concern.

For instance, if an AI tool consistently rates male offenders as more dangerous, it could influence decisions about sentencing, parole, or supervision. Over time, such patterns could contribute to systemic inequalities.

Similarly, the ability to influence AI outputs through prompt design raises questions about accountability. Who controls how questions are framed? And how transparent are these processes?

What happens when the facts stay the same, but the framing changes? This study is about how different prompt frames shape AI-generated sentencing recommendations across identical legal scenarios.

— Gustavo S. Mesch

AI as a mirror of human judgment

One of the broader insights from the study is that AI systems often reflect human patterns of thinking rather than transcending them. The sensitivity to framing, reliance on anchors, and gender bias all mirror well-documented aspects of human decision-making.

This challenges the notion of AI as an objective arbiter. Instead, it suggests that AI operates within the same cognitive and cultural frameworks that shape human judgment.

From this perspective, the goal should not be to eliminate bias, which may be unrealistic, but to understand and mitigate it. This requires careful attention to data, design, and deployment.

The need for careful oversight

The study by Gustavo S. Mesch contributes to a growing conversation about the ethical use of AI in society. By demonstrating how easily AI outputs can be influenced, it highlights the importance of rigorous testing and oversight.

As artificial intelligence becomes more integrated into everyday life, trust will be a key factor in its acceptance. Studies like this one play a crucial role in building that trust by revealing both the capabilities and the shortcomings of these systems.

The findings serve as a reminder that technology is not inherently neutral. Human choices, values, and assumptions shape it.

In the context of criminal justice, where decisions have profound consequences, this insight is particularly important. Ensuring fairness and accountability will require not only technical innovation but also ethical reflection.

Reference

Mesch, G. S. (2026). The effect of prompt framing on AI generated sentencing recommendations: A research note. Criminal Justice Studies. https://doi.org/10.1080/1478601X.2026.2624489