Artificial intelligence is already woven into everyday life. It helps us write, translate, diagnose disease, and navigate cities. Increasingly, it is also entering one of the most sensitive domains of all: mental health and well-being.

Here, the stakes are different. A system that misclassifies an image might cause inconvenience. A system that misunderstands distress, vulnerability, or trust can cause real, irreversible harm.

This raises a foundational question: can we design AI systems that are not just intelligent, but structurally safe, interpretable, and compassionate?

Most current approaches try to solve this by adding layers of ethics after the fact: rules, filters, or fine-tuning meant to rein in behavior. The work discussed here explores another possibility: instead of adding care later, we could design AI systems so that care is built into the core from the start.

The safest AI is not controlled after it acts, but designed so that care guides every choice.

—Edouard Siregar

Surprisingly, one place to look for guidance is not psychology or philosophy alone, but physics.

The article “AI with Symbolic Empathy: Shannon-Neumann Insight Guided Logic,” published in Cognitive Computation by lead author Edouard Siregar, proposes a symbolic AI architecture in which an agent aligns a person’s current cognitive–emotional state with their ideal trajectory using a five-stage hierarchical reasoning process.

From physics to minds

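For centuries, physicists have relied on a simple but powerful idea known as the principle of least action. In plain terms, of all the paths a physical system could take from one state to another, the one it actually follows makes a single quantity, called the action, stationary (typically a minimum) while respecting the system’s constraints. The resulting motion balances efficiency and stability.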
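
For readers who want the formal version, the principle can be written compactly in its standard textbook form. The symbols follow common physics convention and are not taken from the article: q(t) is the system's path, L is the Lagrangian, and S is the action.

```latex
S[q] = \int_{t_1}^{t_2} L\bigl(q(t), \dot{q}(t), t\bigr)\,dt,
\qquad
\delta S = 0
\;\;\Longrightarrow\;\;
\frac{d}{dt}\frac{\partial L}{\partial \dot{q}} - \frac{\partial L}{\partial q} = 0
```

The condition on the left says that small variations of the path leave the action unchanged to first order. The equation on the right (the Euler-Lagrange equation) is the rule of motion that follows from it.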

Human brains are physical systems too. They use electrical and chemical signals, consume energy, exchange information with the world, and adapt over time. Crucially, the brain is not a closed machine. It is an open system, constantly shaped by stress, learning, relationships, and care.

If we take this seriously, mental processes such as attention, emotion, and decision-making can be understood as dynamical processes: they unfold over time, within physical limits, and in response to the environment.

The framework described here explores what happens when we design AI systems with the same philosophy: not blindly optimizing, but respecting structural limits that prioritize human well-being.

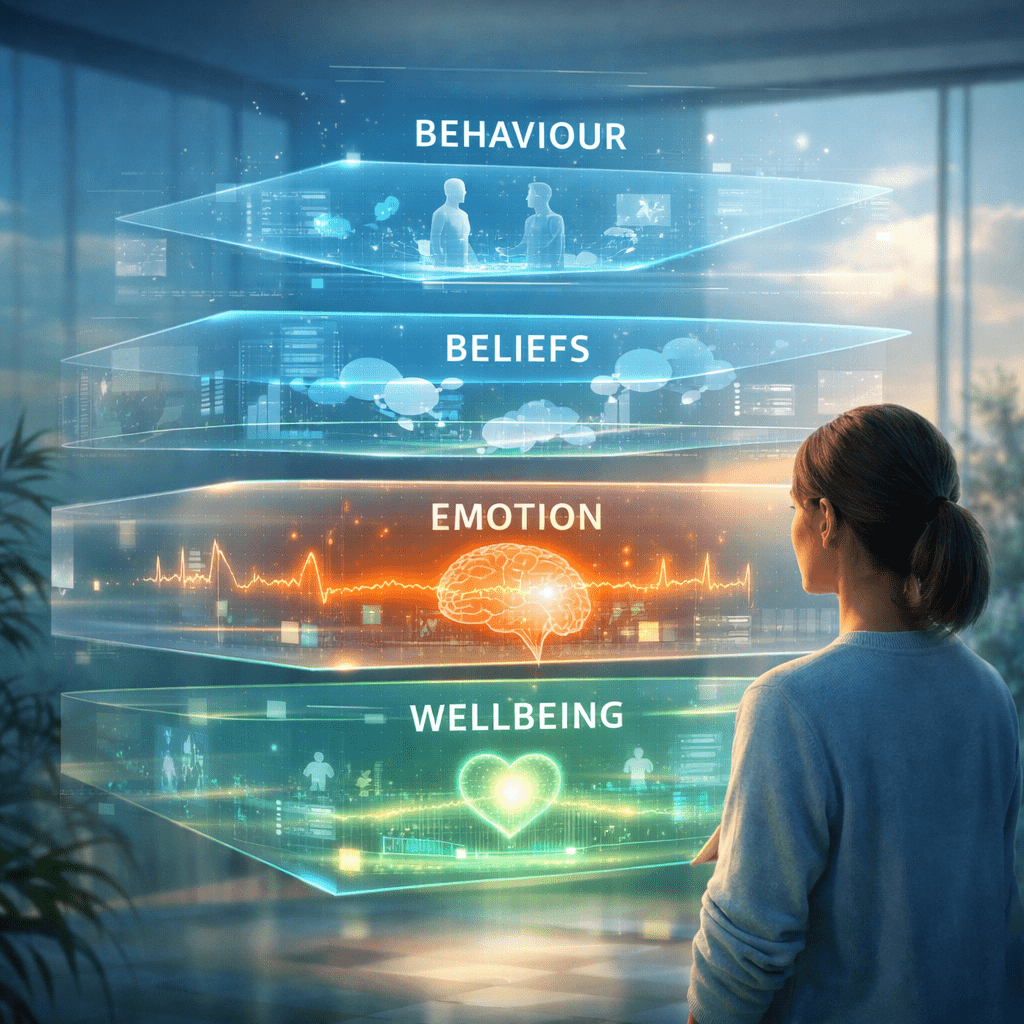

A layered view of human experience

Human experience is not flat. We observe behavior. We infer beliefs and intentions. We feel sensations and emotions. And we experience overall well-being or distress.

A compassionate AI must respect this layered structure.

In this approach, cognition is modeled across four interconnected levels: observable behavior, inferred beliefs, felt sensations, and lived experience. These levels are not treated as isolated modules. Instead, they continuously inform one another.

What makes this especially important for mental health is feedback: a person’s well-being should shape how their words are interpreted, not the other way around. This mirrors how human caregivers work, adjusting understanding based on context and vulnerability.

Why uncertainty is not the enemy

Many AI systems are designed to reduce uncertainty as quickly as possible. In mental health, that instinct can backfire. Pushing for clarity too fast can feel invasive, dismissive, or destabilizing.

Here, uncertainty is treated with care.

The system maintains a probabilistic understanding of a person’s internal state—a “Theory of Mind” that explicitly acknowledges what it does not know. It seeks new information only when that information is relevant and likely to help.

In other words, curiosity is allowed, but only when it serves care.
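
One way to picture this gating of curiosity is the minimal sketch below. It is an illustration under stated assumptions, not the article's implementation: the belief is a simple discrete distribution, "likely to help" is approximated by expected information gain (expected reduction in Shannon entropy), and the function names, the `relevant` flag, and the `min_gain_bits` threshold are all hypothetical.

```python
import math


def entropy(belief):
    """Shannon entropy (in bits) of a discrete belief {state: probability}."""
    return -sum(p * math.log2(p) for p in belief.values() if p > 0)


def posterior(belief, likelihood, answer):
    """Bayes update of the belief after observing one answer to a question."""
    unnorm = {s: belief[s] * likelihood[s][answer] for s in belief}
    total = sum(unnorm.values())
    return {s: v / total for s, v in unnorm.items()}


def expected_information_gain(belief, likelihood):
    """Expected entropy reduction (bits) from asking the question.

    likelihood[state][answer] is P(answer | state), an assumed input.
    """
    answers = next(iter(likelihood.values())).keys()
    gain = entropy(belief)
    for a in answers:
        p_a = sum(belief[s] * likelihood[s][a] for s in belief)
        if p_a > 0:
            gain -= p_a * entropy(posterior(belief, likelihood, a))
    return gain


def should_ask(belief, likelihood, relevant, min_gain_bits=0.2):
    """Ask only if the question is relevant AND expected to be informative."""
    return relevant and expected_information_gain(belief, likelihood) >= min_gain_bits
```

In this sketch the system stays silent in two cases: when a question would not change what it believes, and when the question is informative but not relevant to the person's care. The uncertainty itself is kept rather than forced to zero.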

Safety by design, not by policing

A central claim of this work is that safety should not rely on constant monitoring or external correction. Instead, it should be guaranteed by design.

In practical terms, the system is constructed so that actions expected to reduce well-being are not considered valid options at all. They are excluded before any decision is made.
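
The idea of excluding harmful options before any choice is made can be sketched as a filter applied ahead of selection. This is a simplified illustration, not the article's method: the `Action` class, its `expected_wellbeing_change` and `helpfulness` fields, and the zero threshold are hypothetical, and how such estimates would be obtained is left open.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    expected_wellbeing_change: float  # hypothetical estimate; negative means expected harm
    helpfulness: float                # hypothetical score used only among safe actions


def admissible(actions):
    """Keep only actions not expected to reduce well-being."""
    return [a for a in actions if a.expected_wellbeing_change >= 0.0]


def choose(actions):
    """Pick the most helpful action from the admissible set only.

    Harmful actions never enter the comparison, however high they score.
    Returns None if no admissible action exists.
    """
    candidates = admissible(actions)
    if not candidates:
        return None
    return max(candidates, key=lambda a: a.helpfulness)
```

The design choice is that the filter runs first and the ranking second, so a harmful action cannot win by scoring well on some other measure.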

This approach also emphasizes smoothness. The system is constrained to change gradually over time, avoiding sudden shifts in tone, priorities, or behavior.
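
A simple way to picture the smoothness constraint is a cap on how far any internal setting may move in one step. The sketch below is an assumption-laden illustration rather than the article's mechanism: the scalar "setting" (for example, a tone parameter between 0 and 1), the function names, and the `max_step` value are hypothetical.

```python
def smooth_step(current, target, max_step=0.1):
    """Move from current toward target, changing by at most max_step."""
    delta = target - current
    delta = max(-max_step, min(max_step, delta))  # clip the change
    return current + delta


def trajectory(start, target, steps, max_step=0.1):
    """Apply smooth_step repeatedly and return every intermediate value."""
    values = [start]
    for _ in range(steps):
        values.append(smooth_step(values[-1], target, max_step))
    return values
```

Under this rule, even a large change in the desired setting is reached through a sequence of small moves, so the person never experiences an abrupt jump.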

Compassion as a structural principle

Perhaps the most unconventional idea in this framework is its definition of compassion. Rather than treating compassion as a feeling or a reward signal, it treats it as a structural property.

In physics, symmetries describe transformations that leave something essential unchanged. Applied to AI, this means that changes in internal representation, such as different wording, contexts, or interpretations, should not alter the system’s commitment to care.

By grounding AI design in principles drawn from physics, information theory, and cognitive science, this framework argues for a future in which care is not an afterthought but a foundation.

Mental health AI will never replace human empathy. Nor should it. But if such systems are to exist, they should be designed to respect human well-being from the ground up.

Conclusions

The future of safe artificial intelligence will not be secured by ever-tighter oversight, but by how these systems are designed from the start. When compassion, uncertainty, and human well-being are treated as structural constraints rather than external ethical patches, safety becomes a property of the architecture itself. Much like physical systems that evolve along stable paths governed by fundamental principles, AI systems can be built so that care is the most natural trajectory of their decisions.

This perspective is especially critical in domains such as mental health, where trust, vulnerability, and interpretation intersect. In such contexts, the goal is not merely to correct machines after harm occurs, but to design them so that actions which diminish well-being are structurally unlikely. Compassion, in this sense, becomes not an added behavior, but a stable property of the system’s logic.

In the end, the question is not how we will control intelligent machines, but whether we will design them so that care becomes the most natural path they can follow.

Reference

Siregar, E. (2026). AI with Symbolic Empathy: Shannon-Neumann Insight Guided Logic. Cognitive Computation, 18(1), 7. https://doi.org/10.1007/s12559-025-10536-9