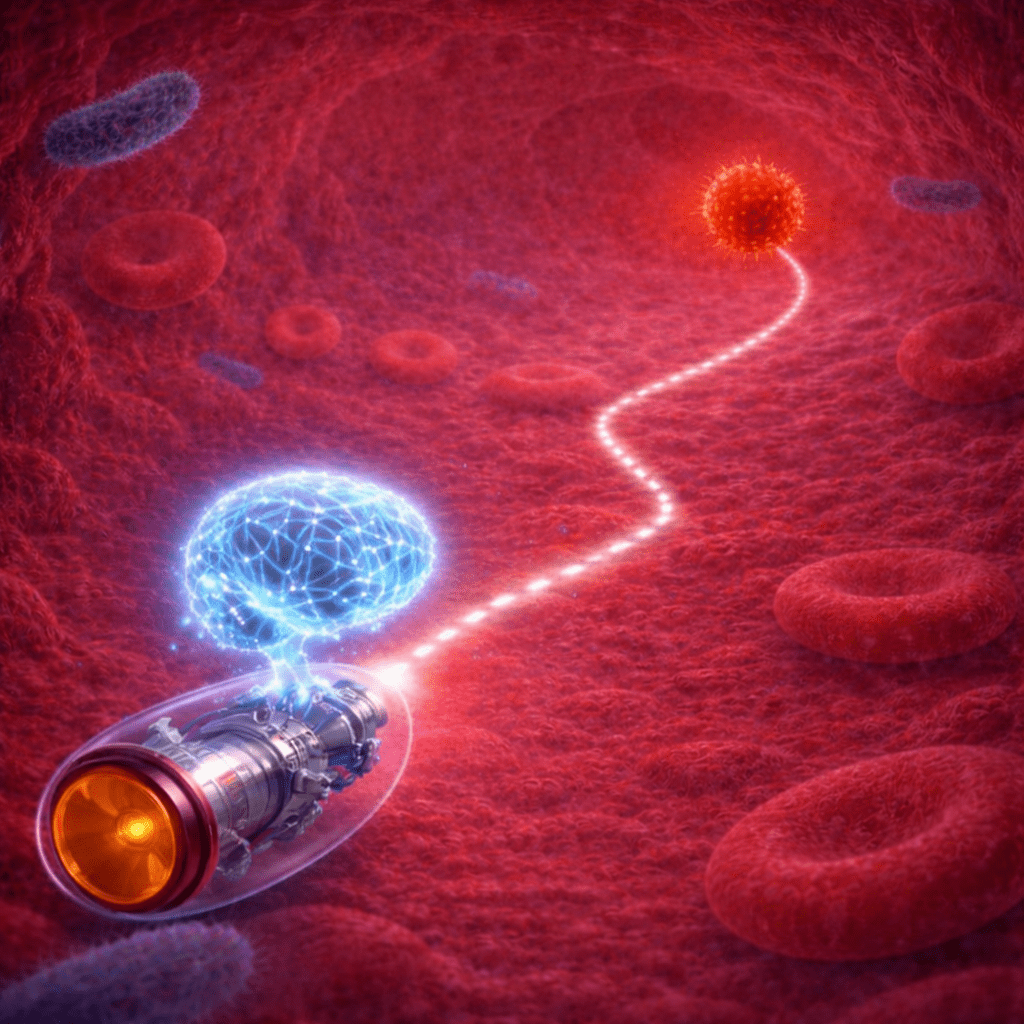

Microrobots show great promise in biomedicine and environmental remediation, particularly for non-invasive access to confined regions of the human body for targeted drug delivery and diagnostics. Yet as these applications grow more complex, a familiar and formidable challenge keeps resurfacing: precise control in complex, dynamic environments.

Intelligent control systems have advanced, but they often suffer from sample inefficiency and prolonged training times. Microrobots must navigate confined microenvironments where accurate physical modeling is difficult, and training deep reinforcement learning (DRL) algorithms directly in physical systems is time-consuming and resource-intensive.

A study led by Prof. Tingting Yu at the South China University of Technology, published in Advanced Intelligent Systems, tackles this problem. The research article, titled “Adaptive Autonomy in Microrobot Motion Control via Deep Reinforcement Learning and Path Planning Synergy,” presents a data-driven training strategy that combines supervised learning, model-free DRL, and classical path planning, and reports evidence that these limitations can be overcome.

Overcoming the sample efficiency challenge

Traditional approaches to microrobot control often rely on precise physical modeling, but building accurate kinematic and dynamic models in complex environments is extremely challenging. On the other hand, while DRL allows agents to learn optimal policies through interaction, it is highly sample-inefficient when applied directly to physical systems.

To address these issues, the authors introduce a novel framework. The process begins with limited training of a Soft Actor-Critic (SAC) agent in a physical microrobotic system. During this phase, interaction data are collected prior to full policy convergence. These data are then used to train a supervised artificial neural network (SuANN) that emulates microrobot-environment dynamics.
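
The supervised step described above can be illustrated with a minimal sketch. It is not the authors' SuANN: to stay short and deterministic, a linear least-squares model stands in for the neural network, and the logged transitions are synthetic. The function names (`fit_surrogate`, `predict`), the 2D state and action layout, and the toy dynamics are all assumptions made for illustration.

```python
import numpy as np

def fit_surrogate(states, actions, next_states):
    """Fit next_state = [state, action, 1] @ W by ordinary least squares."""
    X = np.hstack([states, actions, np.ones((len(states), 1))])
    W, *_ = np.linalg.lstsq(X, next_states, rcond=None)
    return W

def predict(W, state, action):
    """Predict the next state for a single (state, action) pair."""
    x = np.concatenate([state, action, [1.0]])
    return x @ W

# Synthetic stand-in for transitions logged during early agent training.
rng = np.random.default_rng(0)
states = rng.uniform(-1, 1, size=(500, 2))
actions = rng.uniform(-1, 1, size=(500, 2))
next_states = states + 0.1 * actions  # hypothetical ground-truth dynamics

W = fit_surrogate(states, actions, next_states)
pred = predict(W, np.array([0.2, -0.3]), np.array([1.0, 0.5]))
```

The key point is the data flow: transitions gathered before the policy has converged are reused as a supervised dataset of (state, action) to next-state pairs. A real system would likely need a nonlinear model, which is where a neural network comes in.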

The resulting SuANN serves as a surrogate environment. This design is critical, as it enables efficient training and benchmarking of multiple DRL algorithms without the need for further time-consuming physical data collection. Among the evaluated algorithms, Truncated Quantile Critics (TQC) demonstrated the best performance and was selected for integration with an A* path planning algorithm to achieve autonomous navigation.
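
One way to picture a surrogate environment is as a thin wrapper that exposes a learned one-step model through a reset/step interface. The sketch below assumes such an interface; the class name `SurrogateEnv`, the distance-based reward, the termination rule, and the toy model are hypothetical and are not taken from the paper.

```python
import numpy as np

class SurrogateEnv:
    """Reset/step wrapper around a learned one-step dynamics model."""

    def __init__(self, model, goal, max_steps=200, tol=0.05):
        self.model = model  # callable: (state, action) -> next_state
        self.goal = np.asarray(goal, dtype=float)
        self.max_steps = max_steps
        self.tol = tol
        self.state = None
        self.t = 0

    def reset(self, start=(0.0, 0.0)):
        self.state = np.asarray(start, dtype=float)
        self.t = 0
        return self.state.copy()

    def step(self, action):
        # The learned model replaces the physical robot and coil system.
        self.state = self.model(self.state, np.asarray(action, dtype=float))
        self.t += 1
        dist = float(np.linalg.norm(self.goal - self.state))
        reward = -dist  # hypothetical reward: closer to the goal is better
        done = dist < self.tol or self.t >= self.max_steps
        return self.state.copy(), reward, done

def toy_model(state, action):
    """Placeholder for a trained surrogate: bounded displacement per step."""
    return state + 0.1 * np.clip(action, -1.0, 1.0)

env = SurrogateEnv(toy_model, goal=(1.0, 0.0))
obs = env.reset()
done = False
steps = 0
while not done:
    # Naive hand-written policy used only to exercise the interface.
    obs, reward, done = env.step(env.goal - obs)
    steps += 1
```

Because every candidate algorithm interacts only with `reset` and `step`, several agents can be trained and compared against the same surrogate without touching the hardware again.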

Dual-loop architecture: When planning meets learning

The study not only addresses training efficiency but also introduces a structured perspective on autonomy in microrobotic systems. In this framework, perception, path planning, and motion control are handled autonomously through a closed-loop DRL–A* architecture.

This system employs a global–local control strategy. The integrated A* planner dynamically recomputes collision-free paths at each time step. Meanwhile, the DRL controller provides low-level motion control to accurately track the generated waypoint sequence.
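
The global–local split can be sketched as follows, under several assumptions: the workspace is a small 4-connected occupancy grid, the planner runs once rather than at every time step, and a bounded-step waypoint follower (`track`) stands in for the learned DRL controller. The grid, function names, and step size are illustrative only.

```python
import heapq

def astar(grid, start, goal):
    """A* on a 4-connected occupancy grid (1 = obstacle). Returns cells or None."""
    rows, cols = len(grid), len(grid[0])

    def h(cell):  # Manhattan distance, admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]
    came_from = {start: None}
    g = {start: 0}
    while open_heap:
        _, cost, cur = heapq.heappop(open_heap)
        if cur == goal:
            path = []
            while cur is not None:
                path.append(cur)
                cur = came_from[cur]
            return path[::-1]
        if cost > g[cur]:
            continue  # stale heap entry
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (cur[0] + dr, cur[1] + dc)
            if not (0 <= nxt[0] < rows and 0 <= nxt[1] < cols):
                continue
            if grid[nxt[0]][nxt[1]]:
                continue
            new_cost = cost + 1
            if new_cost < g.get(nxt, float("inf")):
                g[nxt] = new_cost
                came_from[nxt] = cur
                heapq.heappush(open_heap, (new_cost + h(nxt), new_cost, nxt))
    return None

def track(path, step=0.25, tol=0.05):
    """Stand-in local controller: move at most `step` toward each waypoint in turn."""
    pos = [float(path[0][0]), float(path[0][1])]
    trajectory = [tuple(pos)]
    for wp in path[1:]:
        while True:
            dx, dy = wp[0] - pos[0], wp[1] - pos[1]
            dist = (dx * dx + dy * dy) ** 0.5
            if dist <= tol:
                break
            scale = min(step, dist) / dist
            pos[0] += dx * scale
            pos[1] += dy * scale
            trajectory.append(tuple(pos))
    return trajectory

grid = [
    [0, 0, 0, 0],
    [1, 1, 1, 0],
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))   # global layer: collision-free waypoints
trajectory = track(path)             # local layer: follow the waypoints
```

The planner only decides where to go, and the controller only decides how to get to the next waypoint. That separation is what lets a learned low-level policy be swapped in without changing the planning layer.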

Validation in complex dynamic environments

To validate the framework, the authors ran extensive experiments with a 280 µm cubic magnetic microrobot actuated by a custom electromagnetic coil system and tracked via optical microscopy. The system was evaluated across a range of environments, including obstacle-free workspaces, maze-like structures, slope-integrated mazes, and capillary-shaped channels that mimic vascular geometries.

Quantitative results demonstrate that the SuANN-based training paradigm significantly improves efficiency and performance. Training time in the physical environment fell from approximately 35 hours to about 7 hours (roughly an 80% reduction), while the required physical interaction data were reduced by more than 50%.

When evaluated in real-world environments, the TQC-SuANN model demonstrated superior robustness. Compared to the baseline SAC model trained entirely in the physical system, TQC-SuANN reduced trajectory deviation by 30.69% and increased task completion speed by 23.43%. Furthermore, it exhibited lower reward variance and smoother trajectories.

Implications for future biomedical applications

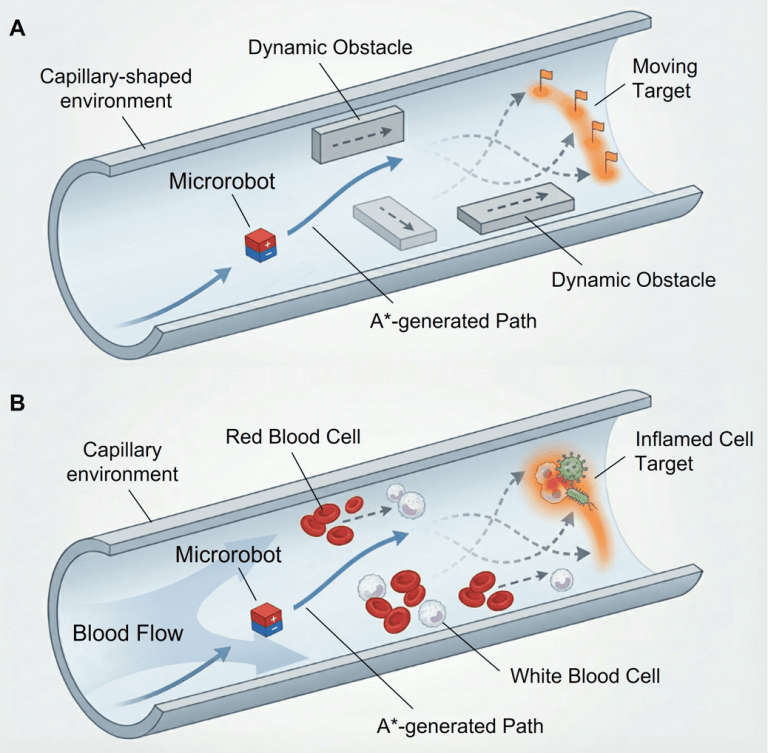

The benefits of the proposed framework are most evident in complex environments with dynamic elements. In highly challenging scenarios involving moving obstacles, moving targets, or both (Figure 1A), the microrobot consistently avoided collisions and converged on the target.

“Our framework shows that combining data-driven learning with classical path planning can dramatically reduce the need for expensive physical training while improving navigation performance. This represents an important step toward practical medical microrobots that can safely operate inside the human body.”

—Amar Salehi

In environments featuring a moving target, the system successfully reformulated the navigation task as a pursuit problem, continuously updating the path and control actions in real time. This ability to chase and reach moving targets while avoiding dynamic obstacles represents a significant step toward the practical deployment of autonomous microrobots in biomedical and other real-world applications.
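
Treating navigation as pursuit amounts to replanning toward the target's current position at every step. The sketch below illustrates that loop under simplifying assumptions: obstacles are static, the target follows a scripted route and then stops, and breadth-first search is used as a compact stand-in for A*. All names and the grid layout are hypothetical.

```python
from collections import deque

def bfs_path(grid, start, goal):
    """Shortest 4-connected path on an occupancy grid (1 = obstacle), or None."""
    rows, cols = len(grid), len(grid[0])
    parent = {start: None}
    queue = deque([start])
    while queue:
        cur = queue.popleft()
        if cur == goal:
            path = []
            while cur is not None:
                path.append(cur)
                cur = parent[cur]
            return path[::-1]
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nxt = (cur[0] + dr, cur[1] + dc)
            if (0 <= nxt[0] < rows and 0 <= nxt[1] < cols
                    and not grid[nxt[0]][nxt[1]] and nxt not in parent):
                parent[nxt] = cur
                queue.append(nxt)
    return None

def pursue(grid, robot, target_route, max_steps=50):
    """Replan toward the target's current cell each step; return (cells, caught)."""
    visited = [robot]
    for t in range(max_steps):
        # The target follows its scripted route, then stays at the last cell.
        target = target_route[min(t, len(target_route) - 1)]
        if robot == target:
            return visited, True
        path = bfs_path(grid, robot, target)
        if path is None:
            return visited, False
        robot = path[1]  # take only the first step, then replan
        visited.append(robot)
    return visited, False

grid = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0],
]
target_route = [(3, 4), (3, 3), (3, 2), (3, 1)]
visited, caught = pursue(grid, (0, 0), target_route)
```

Only the first step of each plan is executed before the plan is discarded. This keeps the robot responsive when the target moves, at the cost of planning more often.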

Future work may extend this framework from artificial capillary-shaped workspaces to real biological capillary environments (Figure 1B). In such settings, the microrobot must operate in more complex, uncertain environments, contending with dynamic biological obstacles, such as red and white blood cells, while interacting with biological targets, such as inflamed cells. Within this context, the proposed autonomous control architecture is envisioned to enable the microrobot to avoid obstacles robustly, continuously adapt its trajectory, and accurately reach moving targets to execute predefined tasks, such as targeted drug delivery.

Reference

Salehi, A., Zhu, B., Liu, H., & Yu, T. (2026). Adaptive Autonomy in Microrobot Motion Control via Deep Reinforcement Learning and Path Planning Synergy. Advanced Intelligent Systems, 202501053. https://doi.org/10.1002/aisy.202501053

Coauthors

Tingting Yu received the B.Sc. degree in computational physical chemistry and the M.Sc. degree in chemical engineering and physical chemistry from the Technology University of Darmstadt, Darmstadt, Germany, in 2012 and 2015, respectively, and the Ph.D. degree in physical chemistry from the University of Stuttgart, Stuttgart, Germany, and the Max-Planck-Institute for Intelligent Systems, Stuttgart, Germany, in 2021. She is currently an Associate Professor at the Shien-Ming Wu School of Intelligent Engineering, South China University of Technology, Guangzhou, China. Her research interests include micro/nano robotics and fabrication.

Bairong Zhu graduated from the School of Mechanical and Aerospace Engineering at Jilin University, Changchun, China, in 2023 with a Bachelor’s degree in Mechanical Engineering. He is currently pursuing a Ph.D. at the Shien-Ming Wu School of Intelligent Engineering, South China University of Technology. His research interests include intelligent micro- and nanorobots, deep reinforcement learning and control, and microfluidics simulation and modeling.